Optimising ML Inference on AWS Using Amazon SageMaker

Webinar Series Australia and New Zealand

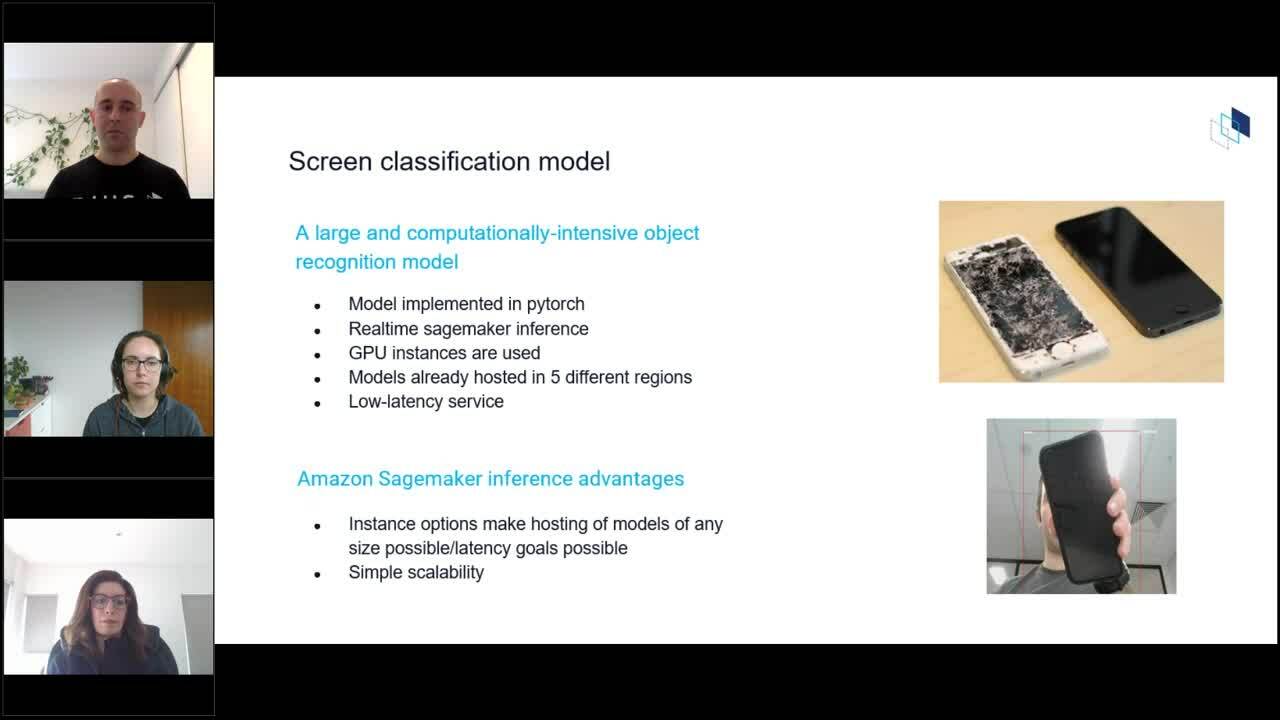

Amazon SageMaker is a fully managed machine learning (ML) service that allows data scientists and developers to build, train, and deploy ML models for any use case with fully managed infrastructure, tools, and workflows, and that reduces shadow IT when deploying ML models. In this session, we will cover various Amazon SageMaker features, with a focus on its deployment capabilities. Amazon SageMaker provides multiple features for managing resources and optimising inference performance when deploying ML models. We will see how these features can be used to deploy and manage ML models at scale.

Speakers:

- Mark Shoebridge, AI & ML Business Development ANZ, AWS

- Romina Sharifpour, Senior AI/ML Specialist SA, AWS

- Sara van de Moosdijk, Senior AI/ML Partner SA, AWS

- Shahin Namin, Machine Learning/Computer Vision Consultant, DiUS

Target Audience: Data Scientists, Heads of Analytics, DevOps